Running a Kubernetes cluster doesn’t have to be expensive. In this article I discuss how I’ve set up a Kubernetes cluster that is affordable for personal projects.

The Aim

OK, I admit it, I’m cheap. But is it too much to ask for a Kubernetes cluster for less than $20 per calendar month (pcm)?

I’m a great fan of containerisation and the Kubernetes (k8s) orchestration system in particular, and I like messing about with personal projects, but I’ve always found hosting my own projects in a k8s cluster to be prohibitively expensive.

Why so expensive? Well, you have to pay for a VM (a node in k8s speak) with at least 2 vCPUs (being realistic); you often pay for the master node with a managed k8s service; ingress through a load balancer can be expensive; then there are disk costs, public IP addresses and so on. It all mounts up. Try all this on AWS and you can easily rack up over $100 pcm.

Compare this to any of the ubiquitous web hosting services that use shared resources and cost only a few dollars per month to host your website, and you see the problem. I know I’m not comparing like with like here, but I could always rent a VPS to run my own projects on, and that could easily fit within a budget of $20 pcm.

But it’s not just running stuff that interests me; it’s how you run it and how you deploy it that’s half the fun. It’s the joy of finding cool open source tech, reaching for the nearest container image, integrating with other ideas using a bit of YAML config, hitting kubectl apply and watching as, hey presto, I’m running my own X, Y & Z. If I want comments on my blog, I don’t want to use a commenting service like Disqus, where I have to pay to remove adverts and accept that it will slow down my website, track my visitors and do who knows what else with their personal data. I want to use Commento, which is fast, shows no adverts, is open source and is easy to host myself from a pre-built container image.

So I recently had a go at finding a way to run Kubernetes affordably and, while it’s not entirely pretty, it can be done. What follows is a description of the k8s cluster I’ve set up. It costs me 43p per day, or about $18 (£13.50) pcm. I could definitely go cheaper, but I’m getting a pretty good service for that money, so I’m happy. I do of course welcome all ideas for improvement.

An Affordable Cluster

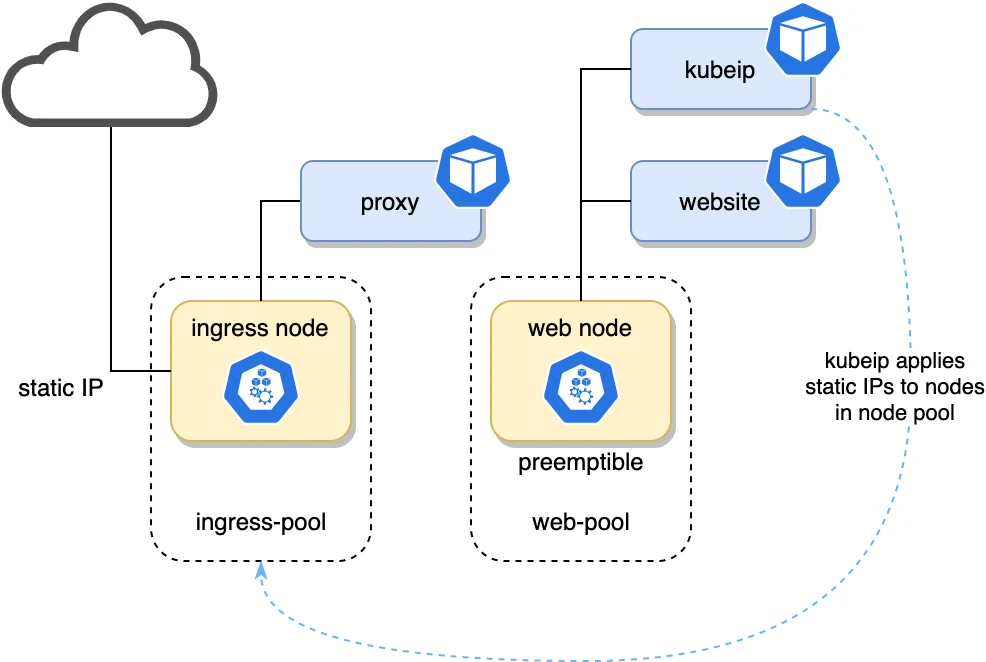

This is an overview of the cluster. I’ve removed everything except a website, reverse proxy and kubeip which I’ll discuss shortly.

Overview of an affordable Kubernetes cluster

In summary, I’ve achieved the low cost with the following approaches:

- Using a managed k8s provider who does not charge for the master node that controls the cluster

- Using GKE’s preemptible nodes, which are about a third of the price

- Not using a cloud load balancer for ingress

- Keeping storage low, and sharing disks where possible

How much does each of these save? Here’s a quick breakdown:

Master node for free

Many cloud providers don’t charge for the master node. At the time of writing, Azure, IONOS and DigitalOcean are just some that provide always-free cluster management. Whether this will remain the case, we’ll have to see. From June 2020, GCP’s GKE service started charging $0.10 per hour ($72 per month) for master nodes, except for the first cluster of a billing account. AWS’s EKS charges $0.10 per hour even for your first cluster.

Saving: $0 vs. $72 per month

GKE preemptible nodes

Google’s GKE (Google Kubernetes Engine) is really excellent, IMHO. From a developer’s perspective I’d say it is the best, which isn’t surprising since they developed Kubernetes in the first place. One thing that makes GKE great is preemptible nodes.

Preemptible nodes are VMs that may be preempted (i.e. stopped) at any moment by Google. They are basically excess Google Compute Engine capacity, so availability will vary somewhat. Google says:

> The probability that Compute Engine will stop a preemptible instance for a system event is generally low. Compute Engine always stops preemptible instances after they run for 24 hours.

However, they are quickly replaced with new nodes, so if you design your services to be fault tolerant and can accept a small amount of downtime then preemptible nodes are a huge cost saver.

Saving for an e2-medium: $7.34 vs. $24.46 per month. That’s a 70% saving!

Avoiding using a cloud load balancer

Provisioning a Cloud Load Balancer is by far the easiest way to manage ingress into your cluster, but damn it they’re expensive! Obviously they come with lots of capabilities, and are essential for any production system, but for personal projects they are just too pricey in my opinion. GCP charges $0.025 per hour, which works out around $18 per month.

The difficulty of managing ingress yourself, e.g. using nginx, is that the Kubernetes node (VM) you run it on will have an ephemeral IP address. Dynamic DNS is a possible solution, but I assume it would result in significant periods of time when DNS servers point at the wrong place.

Enter kubeip. This open source golang service assigns IPs to GKE nodes from a pool of static external IP addresses. More on this below.

Saving: $0 vs $18 per month

(Disclaimer: in my setup detailed below, I dedicate an e2-micro instance to ingress, which is $6.11 per month. Still a big saving though.)
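The monthly figures quoted for the master node and the load balancer follow from the hourly rates if you assume a 30-day (720-hour) month. That assumption, and the helper name monthly_cost, are mine rather than anything the providers publish. A quick sanity check might look like this:

```shell
# Convert an hourly rate (USD) into a monthly cost, assuming a 30-day month.
# awk is used because POSIX shell arithmetic is integer-only.
monthly_cost() {
  awk -v rate="$1" 'BEGIN { printf "%.2f\n", rate * 24 * 30 }'
}

monthly_cost 0.10    # cluster management fee per hour
monthly_cost 0.025   # GCP load balancer charge per hour
```

The two calls give the $72 and $18 per month quoted in the text.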

Reduce persistent storage allocation

For personal projects I avoid SSD storage and stick with HDD. In GCE, HDD storage is $0.04 per GB/month. Pretty cheap, but the default disk size for nodes in GKE is 100GB, which is $4 per month.

Also, the minimum size HDD you can allocate in GCE is 10GB, or $0.40 per month. Not very much, you might argue, but if you want to run a bunch of containers that need persistent storage, this too can soon mount up.

My approach: allocate GKE nodes with 10-20GB disks, and share persistent storage where possible using NFS (this will be the subject of a future blog).

Saving is cluster-size & service dependent, but for my particular setup, where I have a few different services running that require persistence, all sharing a 10GB disk, it’s approx: $1.60 vs. $8 per month.

Cost of the Cluster

There are undoubtedly more savings that can be made, but this is the cost breakdown of my cluster right now:

| Resource | Cost per month |
|---|---|
| Ingress Node - e2-micro | $6.11 |
| Main node pool - 1 x e2-medium preemptible | $7.34 |
| 1 external static IP | $2.88 |
| 40GB total HDD | $1.60 |
| TOTAL | $17.93 |

In UK money, this is about £13.50 pcm or 43p per day.
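To double-check the total, the line items can be summed on the command line. This is nothing more than arithmetic on the figures from the table:

```shell
# Monthly line items in USD: ingress node, preemptible node, static IP, HDD.
# awk does the sum because POSIX shell arithmetic is integer-only.
total=$(printf '%s\n' 6.11 7.34 2.88 1.60 |
  awk '{ sum += $1 } END { printf "%.2f", sum }')

echo "Total: \$$total per month"
```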

Details

For the following we’ll assume we’re going to create a k8s cluster in GKE called my-cluster, and host a website at my-cluster.co.uk (domain is available BTW).

Step 1: create cluster and 2 node pools

Use GCP’s GKE. This is needed for preemptible nodes. Create the my-cluster cluster in the cheapest region, such as us-central1.

Create 2 node pools:

- ingress-pool:
  - 1 x e2-micro, with 10GB HDD
  - runs just the nginx ingress container
  - taint this node pool with dedicated=ingress:NoSchedule

- web-pool:
  - 1 x e2-medium, preemptible, with 20GB HDD
  - runs kubeip & everything else

The ingress-pool is where an nginx ingress will run, which will terminate TLS and proxy services in the cluster. This node will have its IP address set by kubeip to a static external IP address.

The web-pool is where everything else will run, including kubeip. For now this will be 1 node, but can be scaled up as necessary in the future.

Node tainting

We taint the ingress-pool so as to only allow the nginx ingress to run in this pool. Everything else, including kubeip, will run in the other pool.

By the way, this is a restriction of kubeip; you have to run it in a node pool other than the one you want to assign the static IP(s) to. From the kubeip readme:

> We recommend that KUBEIP_NODEPOOL should NOT be the same as KUBEIP_SELF_NODEPOOL

I did try it being the same pool, but it didn’t work.

When you create the ingress node pool you can apply the taint to the whole node pool, but unfortunately you can’t do this afterwards. If, like me, you forget, you can apply it afterwards to the ingress-pool’s single node with something like this:

kubectl taint node gke-my-cluster-ingress-pool-69bb8d97-sft0 dedicated=ingress:NoSchedule

I’m not sure if this taint persists if my node gets replaced. Answers on a postcard… or in the comments please.
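For what it’s worth, one way to sidestep that question is to bake the taint into the node pool when creating it with gcloud. The sketch below is only that: the zone us-central1-a, the function names and the GCLOUD override variable are my own assumptions rather than part of the steps above, and the commands are wrapped in functions so nothing runs until you call them.

```shell
CLUSTER=my-cluster
ZONE=us-central1-a            # assumption: a single-zone cluster in us-central1
GCLOUD="${GCLOUD:-gcloud}"    # set GCLOUD=echo to preview the commands instead of running them

# Ingress pool: one e2-micro with a 10GB HDD, tainted at pool level.
create_ingress_pool() {
  "$GCLOUD" container node-pools create ingress-pool \
    --cluster "$CLUSTER" --zone "$ZONE" \
    --machine-type e2-micro --num-nodes 1 \
    --disk-type pd-standard --disk-size 10 \
    --node-taints dedicated=ingress:NoSchedule
}

# Web pool: one preemptible e2-medium with a 20GB HDD.
create_web_pool() {
  "$GCLOUD" container node-pools create web-pool \
    --cluster "$CLUSTER" --zone "$ZONE" \
    --machine-type e2-medium --num-nodes 1 \
    --disk-type pd-standard --disk-size 20 \
    --preemptible
}
```

Call create_ingress_pool and then create_web_pool to run them for real. As far as I can tell from the GKE documentation, a taint set with --node-taints belongs to the node pool and is reapplied to replacement nodes, whereas one added with kubectl taint is not, but do verify that on your own cluster.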

Disk size

The default disk size for nodes in GKE is 100GB. Assuming you’re not using a lot of host storage, such as via emptyDir volumes, I think you can get away with a lot less than this. Container images can take up a lot of space, but Kubernetes will purge unused images if the disk pressure becomes high.

I’ve gone for 10GB for the ingress node since I’m not planning on running anything else on it. For the web-pool node I’ve gone for 20GB. Maybe I could have got away with less, or maybe in a few months I’ll realise I needed more. We’ll see.

Step 2: Deploy kubeip

The next step is installing kubeip on the web-pool node so it sets the IP address of the ingress node to a static IP.

Detailed instructions for installing kubeip are on the kubeip GitHub page. However, this is what I did:

First clone the kubeip repo:

git clone https://github.com/doitintl/kubeip.git

Set some params:

gcloud config set project {insert-your-GCP-project-name-here}

export PROJECT_ID=$(gcloud config list --format 'value(core.project)')

export GCP_REGION=us-central1

export GKE_CLUSTER_NAME=my-cluster

export KUBEIP_NODEPOOL=ingress-pool

export KUBEIP_SELF_NODEPOOL=web-pool

Create the kubeip service account, role and IAM policy binding:

gcloud iam service-accounts create kubeip-service-account --display-name "kubeIP"

gcloud iam roles create kubeip --project $PROJECT_ID --file roles.yaml

gcloud projects add-iam-policy-binding $PROJECT_ID --member serviceAccount:kubeip-service-account@$PROJECT_ID.iam.gserviceaccount.com --role projects/$PROJECT_ID/roles/kubeip

Get the service account key and stick it in a secret:

gcloud iam service-accounts keys create key.json --iam-account kubeip-service-account@$PROJECT_ID.iam.gserviceaccount.com

kubectl create secret generic kubeip-key --from-file=key.json -n kube-system

Give yourself cluster admin RBAC:

kubectl create clusterrolebinding cluster-admin-binding \
  --clusterrole cluster-admin --user "$(gcloud config list --format 'value(core.account)')"

Create IP addresses and label them. I only create 1 here since there’s only 1 node in the ingress pool:

for i in {1..1}; do gcloud compute addresses create kubeip-ip$i --project=$PROJECT_ID --region=$GCP_REGION; done

for i in {1..1}; do gcloud beta compute addresses update kubeip-ip$i --update-labels kubeip=$GKE_CLUSTER_NAME --region $GCP_REGION; done

As I use macOS, the next step in the kubeip documentation didn’t work, because the BSD version of sed that ships with macOS requires a backup-suffix argument after -i. I just substituted manually, but these are the substitutions:

sed -i "s/reserved/$GKE_CLUSTER_NAME/g" deploy/kubeip-configmap.yaml

sed -i "s/default-pool/$KUBEIP_NODEPOOL/g" deploy/kubeip-configmap.yaml

sed -i "s/pool-kubip/$KUBEIP_SELF_NODEPOOL/g" deploy/kubeip-deployment.yaml

However, that last line is redundant because we’ve tainted the ingress-pool so kubeip will naturally schedule on the web-pool node. The following lines in deploy/kubeip-deployment.yaml can be commented out:

# nodeSelector:
#   cloud.google.com/gke-nodepool: web-pool
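Going back to the sed substitutions: if you’d rather not edit the files by hand on macOS, passing a backup suffix attached directly to -i (as in -i.bak) is, as far as I know, accepted by both GNU and BSD sed. A minimal sketch, where replace_in_file is a hypothetical helper name of my own:

```shell
# Replace every occurrence of $1 with $2 in file $3, then drop the backup copy.
# Assumes neither value contains a "/" or other sed metacharacters.
replace_in_file() {
  sed -i.bak "s/$1/$2/g" "$3" && rm -f "$3.bak"
}

# Apply the kubeip substitutions only when run from inside the cloned repo.
if [ -f deploy/kubeip-configmap.yaml ]; then
  replace_in_file reserved "$GKE_CLUSTER_NAME" deploy/kubeip-configmap.yaml
  replace_in_file default-pool "$KUBEIP_NODEPOOL" deploy/kubeip-configmap.yaml
  replace_in_file pool-kubip "$KUBEIP_SELF_NODEPOOL" deploy/kubeip-deployment.yaml
fi
```

The variables are the ones exported earlier in this step, so run it in the same shell session.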

Now we just need to deploy it:

kubectl apply -f deploy/.

OK, sit back and watch kubeip do its thing with:

kubectl get nodes -w -o wide

The IP address of the ingress-pool node should be the static IP. Also useful is checking IP addresses in the cloud console: https://console.cloud.google.com/networking/addresses

Step 3: Deploy something to the cluster

We need some service in the cluster that we can proxy to in the next step. A simple static web site in nginx will do. Create an index.html file of your choice, e.g.:

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>My Website</title>

</head>

<body>

<h1>Hello world!</h1>

</body>

</html>

You’ll need a default.conf. Something like:

server {
    listen 8080;
    server_name my-cluster.co.uk;
    root /usr/share/nginx/html;

    location / {
        try_files $uri $uri/ /index.html;
    }
}

And a Dockerfile:

FROM nginx

COPY index.html /usr/share/nginx/html

COPY default.conf /etc/nginx/conf.d/

Build and push:

docker build -t gcr.io/my-gcp-project/website:1 .

docker push gcr.io/my-gcp-project/website:1

A basic k8s yaml to deploy with:

apiVersion: v1
kind: Service
metadata:
  name: website
  labels:
    app: website
spec:
  type: ClusterIP
  ports:
    - port: 8080
  selector:
    app: website
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: website
  labels:
    app: website
spec:
  replicas: 1
  selector:
    matchLabels:
      app: website
  template:
    metadata:
      labels:
        app: website
    spec:
      containers:
        - name: website
          # Must match the image tag pushed in the previous step
          image: 'gcr.io/my-gcp-project/website:1'
          ports:
            - containerPort: 8080

And kubectl apply it. You can check it’s running by port forwarding and going to http://localhost:8080. Something like:

kubectl port-forward website-74b66cf9d8-db69j 8080

Step 4: Deploy the nginx ingress proxy

Now to deploy the ingress reverse web proxy to the ingress-pool node. Some things to note about this pod:

- It needs to tolerate the taint on the ingress node, AND select the ingress-pool.

- It will use the host network so we can reach it without a cloud load balancer service. In fact we won’t be using a service at all.

- It needs a DNS policy of ClusterFirstWithHostNet since the pod is running with hostNetwork. This is needed so we can proxy to services in the cluster.

First we need a config map for the nginx default.conf, e.g.:

apiVersion: v1
kind: ConfigMap
metadata:
  name: ingress-proxy
data:
  default.conf: |
    # This is needed to allow variables in proxy_pass directives, and bypass upstream startup checks
    # Variables must use the service FQDN <service>.<namespace>.svc.cluster.local
    resolver kube-dns.kube-system.svc.cluster.local valid=30s ipv6=off;

    server {
      listen 80;
      listen [::]:80;
      server_name my-cluster.co.uk;
      return 301 https://$host$request_uri;
    }

    server {
      listen 443 ssl;
      server_name my-cluster.co.uk;
      ssl_certificate /cert/fullchain.pem;
      ssl_certificate_key /cert/privkey.pem;
      ssl_protocols TLSv1.2;
      ssl_ciphers HIGH:!aNULL:!MD5;

      location / {
        set $upstream "http://website.default.svc.cluster.local:8080";
        proxy_pass $upstream;
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
      }

      # redirect server error pages to the static page /50x.html
      #
      error_page 500 502 503 504 /50x.html;
      location = /50x.html {
        root /usr/share/nginx/html;
      }
    }

And kubectl apply it. Note the above assumes you’ve created web server certs for my-cluster.co.uk and deployed to a k8s secret called web-certs. Also, that you’ve set a DNS A record for my-cluster.co.uk to point at the static IP address. Not covered here.
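Although certificates aren’t covered here, it’s worth noting that the key names inside the web-certs secret have to match the file names nginx expects under /cert, i.e. fullchain.pem and privkey.pem. A sketch of creating such a secret follows; the local ./certs directory, the function name and the KUBECTL override variable are assumptions of mine, and the command is wrapped in a function so nothing runs until you call it.

```shell
CERT_DIR="${CERT_DIR:-./certs}"   # hypothetical local directory holding the PEM files
KUBECTL="${KUBECTL:-kubectl}"     # set KUBECTL=echo to preview the command instead of running it

# Create the web-certs secret with keys named after the files nginx reads from /cert.
create_web_certs_secret() {
  "$KUBECTL" create secret generic web-certs \
    --from-file=fullchain.pem="$CERT_DIR/fullchain.pem" \
    --from-file=privkey.pem="$CERT_DIR/privkey.pem"
}
```

Calling create_web_certs_secret creates the secret in the current namespace, which needs to be the one the ingress proxy is deployed to.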

Now for the deployment:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: ingress-proxy
  labels:
    app: ingress-proxy
spec:
  replicas: 1
  selector:
    matchLabels:
      app: ingress-proxy
  template:
    metadata:
      labels:
        app: ingress-proxy
    spec:
      hostNetwork: true
      dnsPolicy: ClusterFirstWithHostNet
      nodeSelector:
        cloud.google.com/gke-nodepool: ingress-pool
      tolerations:
        - key: dedicated
          operator: Equal
          value: ingress
          effect: NoSchedule
      containers:
        - name: ingress-proxy
          image: 'nginx'
          ports:
            - name: https
              containerPort: 443
              hostPort: 443
            - name: http
              containerPort: 80
              hostPort: 80
          volumeMounts:
            - name: web-certs
              mountPath: /cert
              readOnly: true
            - name: nginx-conf
              mountPath: /etc/nginx/conf.d/default.conf
              subPath: default.conf
              readOnly: true
      volumes:
        - name: web-certs
          secret:
            secretName: web-certs
        - name: nginx-conf
          configMap:
            name: ingress-proxy

And kubectl apply it.

Step 5: Open up the VPC firewall

There’s one last step to allow the ingress node to be reachable; the VPC firewall needs to be configured to allow http & https through:

gcloud compute firewall-rules create http-node-port --allow tcp:80

gcloud compute firewall-rules create tls-node-port --allow tcp:443

With any luck you should now have an nginx service proxying the website we created in Step 3, reachable via https://my-cluster.co.uk.

Conclusion

OK, that’s it. Hopefully that has been useful and you too can enjoy Kubernetes without breaking your bank account.

I’m sure there are improvements that could bring the price down even further (e.g. making the ingress node pool preemptible; I don’t think there’s any reason why it couldn’t be), as well as entirely different approaches. I’d love to hear any thoughts or ideas you have in the comments below.
